Adversarial Patch Training

This is work led by Sukrut Rao.

Abstract

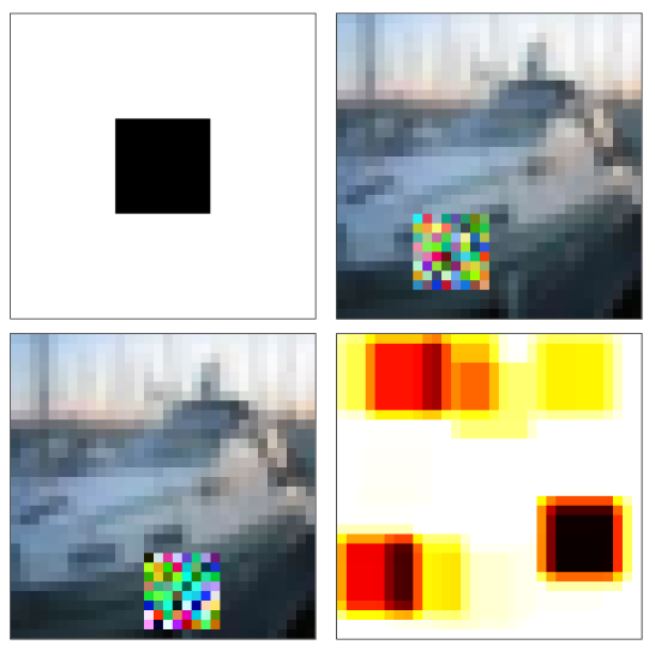

Deep neural networks have been shown to be susceptible to adversarial examples -- small, imperceptible changes constructed to cause mis-classification in otherwise highly accurate image classifiers. As a practical alternative, recent work proposed so-called adversarial patches: clearly visible, but adversarially crafted rectangular patches in images. These patches can easily be printed and applied in the physical world. While defenses against imperceptible adversarial examples have been studied extensively, robustness against adversarial patches is poorly understood. In this work, we first devise a practical approach to obtain adversarial patches while actively optimizing their location within the image. Then, we apply adversarial training on these location-optimized adversarial patches and demonstrate significantly improved robustness on CIFAR10 and GTSRB. Additionally, in contrast to adversarial training on imperceptible adversarial examples, our adversarial patch training does not reduce accuracy.
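To make the idea of a location-optimized patch more concrete, the following is a minimal sketch, not the authors' implementation. It pastes a rectangular patch into a grayscale image and greedily tries moving it by a fixed stride in four directions, keeping the move that most increases a loss. The names `apply_patch`, `neighbor_locations`, `improve_location`, the `stride` parameter, and the toy `loss_fn` are all illustrative assumptions; in practice the loss would come from the classifier under attack and the patch contents would be optimized as well.

```python
from typing import Callable, List, Tuple

Image = List[List[float]]  # grayscale image as rows of pixel values


def apply_patch(image: Image, patch: Image, top: int, left: int) -> Image:
    """Return a copy of `image` with `patch` pasted at (top, left)."""
    out = [row[:] for row in image]
    for i, patch_row in enumerate(patch):
        out[top + i][left:left + len(patch_row)] = patch_row
    return out


def neighbor_locations(
    image: Image, patch: Image, top: int, left: int, stride: int = 1
) -> List[Tuple[int, int]]:
    """List the up/down/left/right moves that keep the patch inside the image."""
    max_top = len(image) - len(patch)
    max_left = len(image[0]) - len(patch[0])
    moves = [(-stride, 0), (stride, 0), (0, -stride), (0, stride)]
    candidates = [(top + dt, left + dl) for dt, dl in moves]
    return [(t, l) for t, l in candidates if 0 <= t <= max_top and 0 <= l <= max_left]


def improve_location(
    image: Image,
    patch: Image,
    top: int,
    left: int,
    loss_fn: Callable[[Image], float],
    stride: int = 1,
) -> Tuple[int, int]:
    """One greedy step: keep the neighboring location with the highest loss.

    `loss_fn` is a stand-in (assumption) for the classifier loss on the
    patched image; the current location is kept if no move increases it.
    """
    best = (top, left)
    best_loss = loss_fn(apply_patch(image, patch, top, left))
    for t, l in neighbor_locations(image, patch, top, left, stride):
        loss = loss_fn(apply_patch(image, patch, t, l))
        if loss > best_loss:
            best, best_loss = (t, l), loss
    return best
```

Under these assumptions, adversarial training would repeat such location steps alongside updates to the patch contents and then train the network on the resulting patched images.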

Paper

The paper is available on arXiv:

@article{Rao2020ARXIV,
  author  = {Sukrut Rao and David Stutz and Bernt Schiele},
  title   = {Adversarial Training against Location-Optimized Adversarial Patches},
  journal = {CoRR},
  volume  = {abs/2005.02313},
  year    = {2020}
}

Code

The code is available on GitHub:

Code on GitHub

News & Updates

August 4, 2020. The code is now available on GitHub.

May 30, 2020. The paper was accepted at CV-COPS 2020.

May 6, 2020. The paper is available on arXiv.